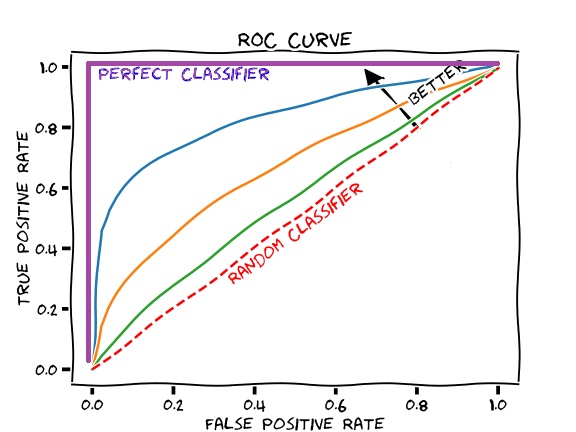

Today I reran yesterday's experiments with the dataloaders for the OOD performance evaluation set to equal in-distribution and out-of-distribution sample sizes. In theory, this should give a more accurate AUROC metric. However, from a quick look at a visualization of the new results, we appear to be getting the same interesting results as yesterday. I'm not yet sure of the underlying reason, so I hope to plot the metrics we calculated on the same set of axes to get a clearer picture of the results.
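For reference, here is a minimal sketch of the kind of evaluation described above: subsample the in- and out-of-distribution scores to the same size, then compute AUROC as the fraction of (out, in) pairs that are ranked correctly. This is not the code from our experiments. The function names `balance` and `auroc` are hypothetical, and I am assuming each sample has already been reduced to a single OOD score where a higher value means "more likely out-of-distribution".

```python
import random


def balance(in_scores, out_scores, seed=0):
    """Subsample both score lists to the size of the smaller one.

    Hypothetical helper: stands in for building in/out loaders
    with equal sample sizes.
    """
    rng = random.Random(seed)
    n = min(len(in_scores), len(out_scores))
    return rng.sample(in_scores, n), rng.sample(out_scores, n)


def auroc(in_scores, out_scores):
    """AUROC via pairwise comparison.

    Assumes a higher score means "more OOD". Returns the fraction of
    (out, in) pairs where the OOD sample scores higher, counting ties
    as half. This is O(n * m), fine for a sketch but slow for large sets.
    """
    wins = 0.0
    for o in out_scores:
        for i in in_scores:
            if o > i:
                wins += 1.0
            elif o == i:
                wins += 0.5
    return wins / (len(in_scores) * len(out_scores))


if __name__ == "__main__":
    # Made-up scores purely for illustration, not experimental results.
    in_s = [0.10, 0.20, 0.40, 0.15, 0.30, 0.25]
    out_s = [0.35, 0.80, 0.90]
    in_b, out_b = balance(in_s, out_s)
    print(len(in_b), len(out_b), auroc(in_b, out_b))
```

Because this pairwise form averages over all (out, in) pairs, its expected value does not depend on how many samples each side contributes, which may be part of why the balanced rerun looks so similar to yesterday's results.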

Today we gave our final presentations, and everyone did a great job. I would like to thank everyone who helped me with this amazing experience! I'm very thankful to have had the opportunity to work on such interesting research with such amazing people this summer.
